NVIDIA Blackwell B200

Source: NVIDIA Technical Blog (modified)

Many scientific applications require 64-bit "double precision" (or FP64) for their floating point calculations. NVIDIA was one of the first GPU manufacturers to recognize this need and meet it in 2007 through its Tesla line of HPC components. This turned out to be a good business move, as 18 years later, NVIDIA accelerators are found in 87% of all supercomputers in the Top500 list and account for 55% of total performance (as of November 2025, when selected by Accelerator/CP Family).

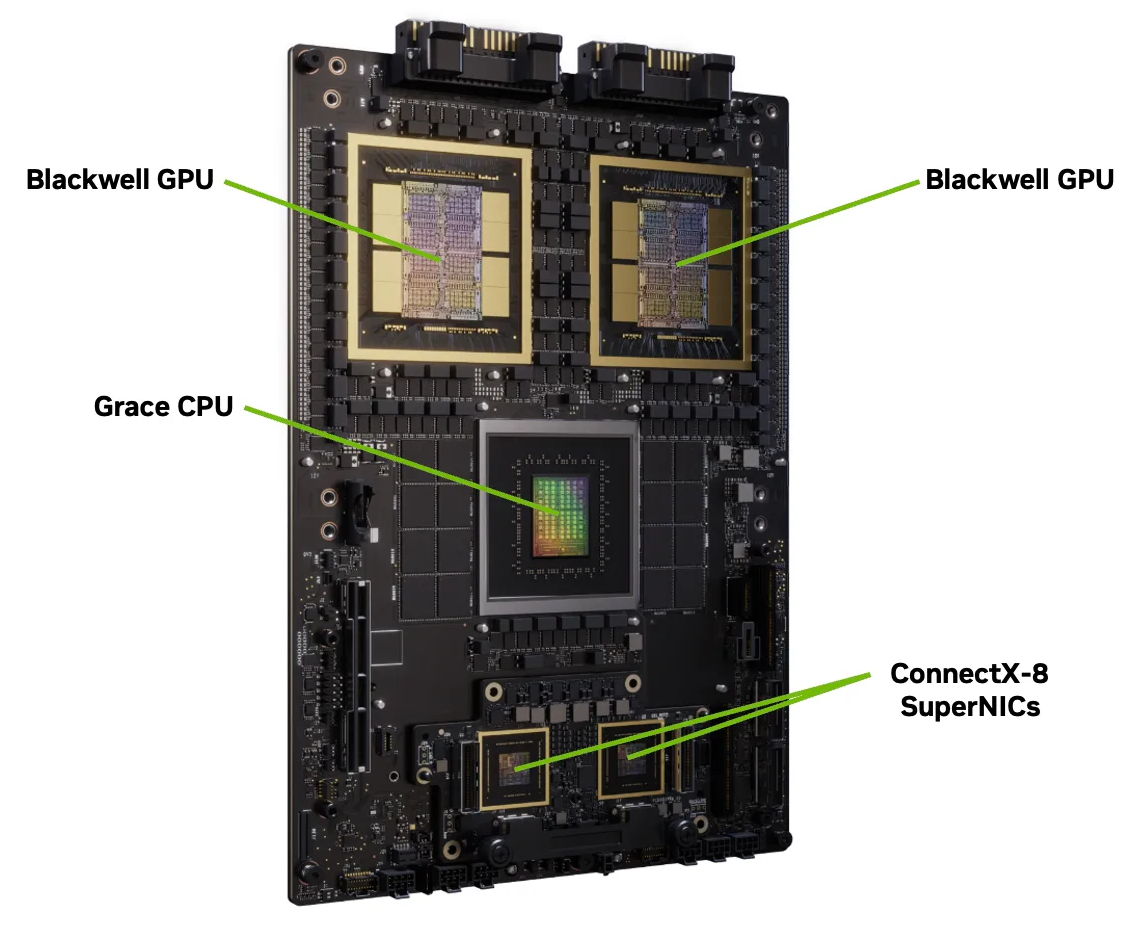

Joining these systems is TACC's Horizon, a leadership-class supercomputer that incorporates 4,000 NVIDIA GPUs through its "Grace Blackwell" compute nodes. The Grace Blackwell Superchip (pictured here) consists of 2 Blackwell GPUs coupled to each other and to 1 Grace CPU. NVIDIA encloses a couple of these Grace Blackwell Superchips in a GB200 NVL4 rack-mounted server, which serves as the building block of Horizon's GPU capacity.

Note that the GPU model in Horizon's GB200 Superchips is the Blackwell B200 rather than the Blackwell Ultra B300 found in the GB300 Superchip. The B200 has superior FP64 performance compared to the B300; the latter trades off FP64 for improved reduced-precision performance that is more appropriate for pure-AI workloads. This makes the Blackwell B200 a better choice for supporting general-purpose GPU (GPGPU) workloads.

We'll be taking an in-depth look at the capabilities of a single Blackwell B200 GPU, bearing in mind that two of them are found in every GB200 Superchip. The B200 gets its FP64 computer power from its 10,240 double precision CUDA cores, all of which can execute a fused multiply-add (FMA) on every cycle. This gives the B200 a peak double precision (FP64) floating-point performance of 40 teraflop/s, computed as follows:

\[10,240 \text{ FP64 CUDA cores } \times 2{\frac{\text{flop}}{\text{core}\cdot\text{cycle}}} \times 1.965{\frac{\text{Gcycle}}{\text{s}}} \approx 40 {\frac{\text{Tflop}}{\text{s}}}\]The factor of 2 flop/core/cycle comes from the ability of each core to execute FMA instructions. The B200's peak rate for single precision (FP32) floating-point calculations is even higher, as it has twice as many FP32 CUDA cores as FP64. Therefore, its peak FP32 rate it is exactly double the above:

\[20,480 \text{ FP32 CUDA cores } \times 2{\frac{\text{flop}}{\text{core}\cdot\text{cycle}}} \times 1.965{\frac{\text{Gcycle}}{\text{s}}} \approx 80 {\frac{\text{Tflop}}{\text{s}}}\]It is interesting to compare the B200's peak FP64 rate to that of the companion Grace processor in the GB200, assuming the latter runs at its maximum "Turbo Boost" frequency on all 72 cores, with four 128-bit vector units per core doing FMAs on every cycle:

\[72 \text{ cores}\ \times 4 \frac{\text{ VPUs}}{\text{core}} \times 2 \frac{\text{ FP64-lanes}}{\text{VPU}} \times 2{\frac{\text{flop}}{\text{lane}\cdot\text{cycle}}} \times 3.44 \frac{\text{ Gcycle}}{\text{s}} \approx 4.0 \frac{\text{ Tflop}}{\text{s}} \]Clearly, the B200 has quite an advantage for highly parallel, flop-heavy calculations, even in double precision.

The Blackwell architecture, like all NVIDIA's GPU designs, is built around a scalable array of Streaming Multiprocessors (SMs) that are individually and collectively responsible for executing many threads. Each SM contains an assortment of CUDA cores for handling different types of data, including FP32 and FP64. The CUDA cores within an SM are responsible for processing the threads by executing arithmetic and other operations synchronously on warp-sized groups of the various datatypes. As we shall see, Blackwell SMs also feature special tensor cores that are effective at accelerating reduced-precision arithmetic.

Given the large number of CUDA cores, it is clear that to utilize the device fully, many thousands of SIMT threads need to be launched by an application. This implies that the application must be amenable to an extreme degree of fine-grained parallelism.

CVW material development is supported by NSF OAC awards 1854828, 2321040, 2323116 (UT Austin) and 2005506 (Indiana University)