Tensor Cores, Fifth Generation

Matrix multiplications lie at the heart of Convolutional Neural Networks (CNNs) which are essential to AI/ML applications. Both training and inferencing require the multiplication of a series of matrices that hold the input data and the optimized weights of the connections between the layers of the neural net. The Tesla V100 was NVIDIA's first product to include tensor cores to perform such matrix multiplications very quickly; subsequent generations of NVIDIA GPUs have expanded this capability. Assuming that reduced-precision (e.g., FP16, FP8, even FP4) representations are adequate for the matrices being multiplied, CUDA 9.1 and later use tensor cores whenever possible to do the convolutions. Given that very large matrices may be involved, tensor cores can greatly improve the training and inference speed of AI/ML models that rely on CNNs.

The Blackwell B200 has 640 fifth-generation tensor cores. There are 4 of these in each of its 160 SMs, arranged so that each of the 4 processing blocks in an SM holds one. A single B200 tensor core can perform up to 512 half-precision FMA operations per clock cycle, so the 4 tensor cores in one SM can perform 2048 FMAs (4096 individual floating point operations!) per clock cycle.

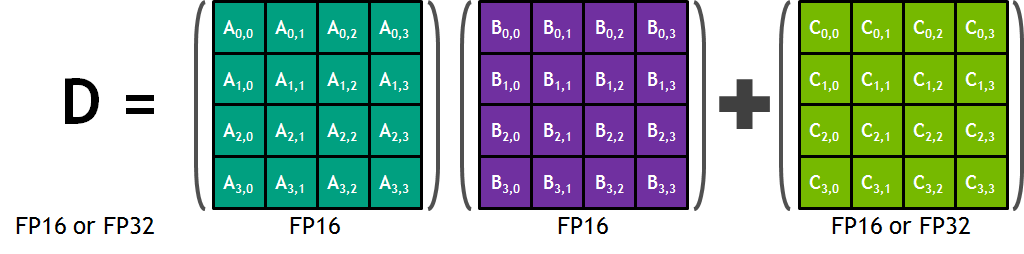

If we go back to the first-generation tensor core, its basic role is to perform the following operation on 4x4 matrices:

\[ \text{D} = \text{A} \times \text{B} + \text{C} \]In this formula, the inputs A and B are FP16 matrices, while the input and accumulation matrices C and D may be FP16 or FP32 matrices (see the figure below).

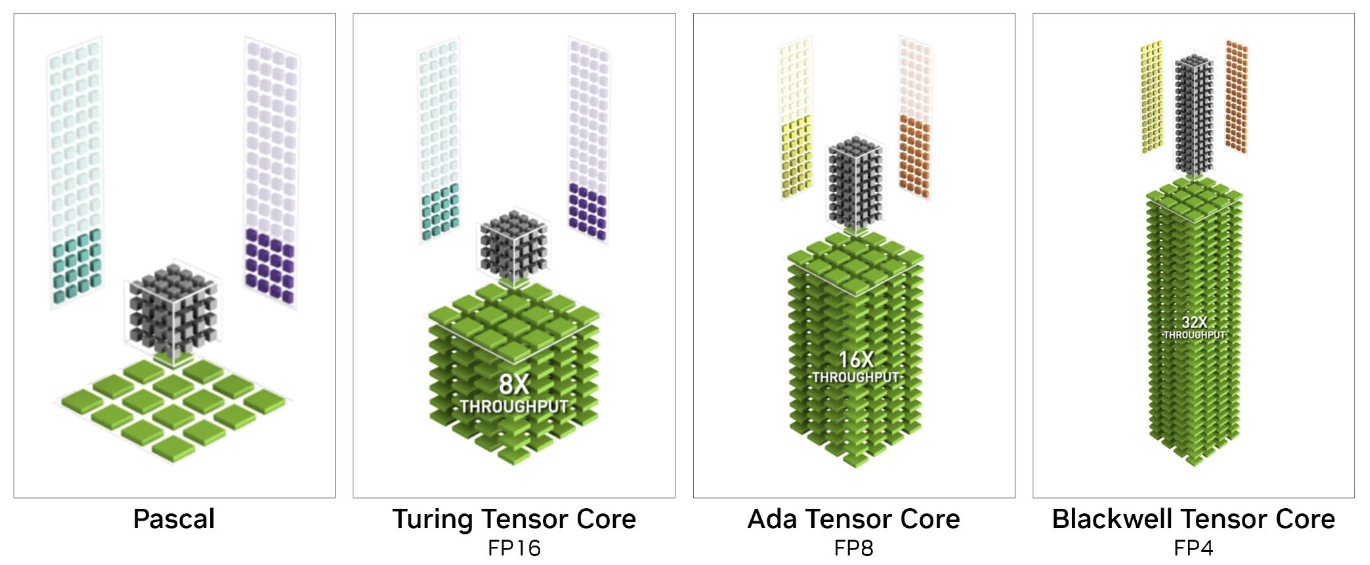

NVIDIA has come up with 3D visualizations of the action performed by its tensor cores, as well as the improvements it has made in each generation; these are shown in the next figure. The overall aim of the illustration is to show how a tensor core can perform fully parallelized 4x4 matrix operations for different types of reduced-precision matrices. The illustration isn't very easy to understand at a glance, so here is an attempt to describe it in words.

In each frame, the two matrices to be multiplied, A and B, are depicted outside the central cube or stack (note, matrix A on the left is transposed). The gray central cube in the first two frames represents the 64 element-wise products required to generate a full 4x4 product matrix. Summing the individual products in the cube along each vertical column produces the desired matrix product, shown in green below the cube.

In the Pascal generation and prior, the necessary arithmetic had to be handled in normal fashion, through warps of threads executing on CUDA cores. In first- and second-generation tensor cores, which were introduced with Volta and Turing, the same set of operations can occur much more quickly. Imagine all 64 blocks within the central cube "lighting up" at once, as pairs of input elements are instantaneously multiplied together along horizontal layers, then instantaneously summed along vertical lines. As a result, one whole product matrix (A times B, transposed) drops down onto the top of the pile, where it is summed with matrix C (transposed), outlined in white. Upon summation it becomes the next output matrix D and is pushed down onto the stack of results. Prior output matrices are shown piling up below the cube, beneath the latest output matrix D (all transposed).

Source: NVIDIA's NVIDIA RTX Blackwell GPU Architecture

Subsequent generations of NVIDIA GPUs enable similar matrix operations to be executed in parallel on arrays that are larger in size, but further reduced in numerical precision (number of bits). The Ada generation doubles the size of the matrices to (4x8)x(8x4), while halving the numerical precision to FP8. The Blackwell generation repeats the pattern, enabling the multiplication of (4x16)x(16x4) matrices having FP4 precision.

The reality of how a tensor core works is undoubtedly much more complicated than the frames of the illustration suggest. Probably it involves a multi-stage FMA pipeline that progresses downward layer by layer. One then envisions successive C matrices dropping in from the top to accumulate the partial sums of the products at each layer.

CVW material development is supported by NSF OAC awards 1854828, 2321040, 2323116 (UT Austin) and 2005506 (Indiana University)